Agility? We tried it! Does not work! – Part 4

Table of Contents

Without planning and measurement, everything is a game of chance!

Without direct customer feedback no learning, no agility is possible

You simply use new metrics to continue to manage people

Without endurance and discipline you will quickly run out of breath

Tools don’t make you agile!

Agility can do diversity!

Outlook

Why that can be true: An overview in several parts

“Agility for agility’s sake” is rarely a good idea in my experience. In general it is not a good sign when a model or a method becomes an end in itself, when it is no longer questioned, when nobody knows anymore why or for what purpose something is being done, or has to be done, in a particular way. I experienced this with classic project management: project managers who shrug their shoulders after spectacularly failed projects and say that they did everything right, followed all rules and processes, just as the PM manual says. At that point, at the very latest, it should become apparent that something is going wrong.

In the first article of my small series on agility in companies, the main question was whether agility is the right approach for organisations at all and, if so, which concrete approach could fit which situation. The second part was mainly about typical problems in the introduction and implementation of agility. In the third part, the focus was mainly on the people themselves. In this fourth instalment, I would like to point out some dangerous misunderstandings.

All the more reason for me to say that this time again I will not be able to provide universal solutions or recipes for successful agility. Some attentive readers may notice that my arguments sometimes even contradict each other. Welcome to reality: ambiguity – according to experts – is a characteristic or a companion of complexity, and in any case unavoidable. And yet I am convinced that readers will be able to take something useful from one or another of my suggestions.

And before we get started, I would like to point out once again that this article may also contain traces of irony!

You confuse agility with anarchy, part 1: Without planning and measurement, everything is a game of chance!

Probably a classic among the misunderstandings about agility: A few years ago, I often came across statements like “Agility, that’s the one with the colorful pieces of paper where everyone can do what they want without planning and without documentation, isn’t it?” Today I hear such statements less and less often, but – even worse – I see teams that unconsciously or for convenience actually behave in a similar way.

For example, I see Scrum teams that plan the next sprint, but at the end of the sprint they hardly ever measure the results consistently and do not seriously analyse any deviations.

Some of these teams would probably protest now: “Of course we check whether we managed to complete all the planned user stories. And it is quite normal that not everything goes as planned, after all we are dealing with complexity and uncertainty (see part 1). And in the retrospective we then define measures to get better.”

But – be honest – many teams remain rather superficial in their analysis of deviations and trust that together they will find the right things that are worth improving. Unfortunately, I rarely see teams that regularly check their Story Point estimates, seriously analyse deviations and, based on the findings, readjust their reference stories (if they have any and use them) so that they can estimate better in the future.
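As a minimal sketch of what such a regular check of estimates could look like: the story names, the numbers and the 50 % tolerance below are invented for illustration, and comparing story points with hours is only one of several possible ways to spot deviations. The result is meant as a starting point for the team's own discussion, not as a report for anyone else.

```python
from statistics import median

# Hypothetical sprint data: (story, estimated story points, actual effort in hours).
# All names and numbers are invented for illustration.
stories = [
    ("Login form", 3, 10.0),
    ("Password reset", 2, 7.0),
    ("Export as PDF", 5, 40.0),
    ("Profile page", 3, 11.0),
]


def flag_estimation_outliers(stories, tolerance=0.5):
    """Return stories whose hours-per-point ratio deviates from the sprint
    median by more than `tolerance` (0.5 means 50 %).

    The value for each flagged story is its ratio relative to the median,
    e.g. 2.0 means "twice as much effort per point as a typical story".
    """
    ratios = {name: hours / points for name, points, hours in stories}
    typical = median(ratios.values())
    return {
        name: round(ratio / typical, 2)
        for name, ratio in ratios.items()
        if abs(ratio - typical) / typical > tolerance
    }


outliers = flag_estimation_outliers(stories)
print(outliers)  # stories worth a closer look in the retrospective
```

A flagged story is not a verdict on the estimate; it is a candidate for asking "why?" a few times, and possibly a reason to readjust a reference story.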

(Note: I do not want to go deeper into the sense and nonsense of estimation at this point. I can well understand the arguments of the #Noestimates movement. But I also see positive (side) effects of estimation, especially for the team. Maybe I will go into this in more detail in the next article.)

What I mean by “seriously” analysing: taking the time together to find the causes of identified problems and deviations first, instead of jumping directly into finding solutions. It sounds banal, but there is a reason why a common and really effective method is called “5 Whys” and not just “Why”: the actual cause is rarely found in the first answer to the question of why a problem occurred.

Without a “serious” cause analysis, the choice of improvement measures is nothing more than a game of chance with a limited probability of winning: In many cases, the spontaneously found measures only conceal the current symptoms, the actual causes remain untouched and will sooner or later cause new symptoms and thus limit the team’s productivity in the long run.

The estimation, planning and measurement of sprint results is just one example. The same is all too often true for the improvement measures themselves, for example those decided upon in a sprint retrospective: my observation is that teams rarely determine, at the moment a measure is defined, how they will later check whether the measure 1) was implemented and 2) had the desired effect. How often does a team decide on measures that everyone thinks are great, leave in a good mood and mentally tick off the problem – only to be surprised later when the problem reappears …
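To make the two checks concrete, here is a small sketch of an improvement measure that carries its own success criteria from the moment it is defined. The class name, the fields and the example values are assumptions for illustration only; a sticky note with the same four pieces of information would do the job just as well.

```python
from dataclasses import dataclass


@dataclass
class ImprovementMeasure:
    """A retrospective action item whose success check is defined up front.

    Hypothetical structure: "lower is better" is assumed for the metric.
    """
    description: str
    metric: str          # what the team will look at later
    baseline: float      # value at the time the measure was decided
    target: float        # value the team hopes to reach
    implemented: bool = False

    def review(self, observed: float) -> str:
        # Check 1: was the measure implemented at all?
        if not self.implemented:
            return "not implemented"
        # Check 2: did it have the desired effect?
        if observed <= self.target:
            return "effective"
        if observed < self.baseline:
            return "partially effective"
        return "no effect"


measure = ImprovementMeasure(
    description="Pair on code reviews to shorten waiting time",
    metric="average review waiting time in hours",
    baseline=20.0,
    target=8.0,
)

first_review = measure.review(observed=12.0)   # nobody got around to it yet
measure.implemented = True
second_review = measure.review(observed=12.0)  # better, but target not reached
print(first_review, "/", second_review)
```

The point of the sketch is the order of events: metric, baseline and target are written down when the measure is decided, so that the next retrospective can ask both questions instead of relying on a good feeling.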

But 1. planning and measuring means work and requires discipline. And 2. it is important that deviations from the plan (in complex/dynamic situations) are seen as normal and as learning opportunities. Under uncertainty, it is not about keeping to a plan as perfectly as possible, it is about learning and adapting as quickly as possible – either adapting the procedure or the plan, usually both! And the more uncertain and dynamic the environment or market in which you operate, the faster you need to learn. The speed of learning is then decisive for success!

As a reminder, the Scrum Guide says right at the beginning:

“Scrum is founded on empirical process control theory, or empiricism. Empiricism asserts that knowledge comes from experience and making decisions based on what is known. Scrum employs an iterative, incremental approach to optimize predictability and control risk. Three pillars uphold every implementation of empirical process control: transparency, inspection, and adaptation.”

And on Wikipedia empiricism is defined as follows:

“Empiricism (from ἐμπειρία empeiría ‘experience, experiential knowledge’) is a methodical and systematic collection of data. Even the knowledge gained from empirical data is sometimes called empiricism for short.”

You’re too preoccupied with yourself: Without direct customer feedback no learning, no agility is possible

One of my customers told me a few months ago that he (managing director of a B2C company) had invited his product owners to an innovation workshop. Everybody was very committed and creative and many exciting ideas came up in the first hours. Then he invited his product owners to go out on the street together to test the new ideas directly on potential customers. This was the trigger to become even more creative! However, the creative energy was used to find reasons why it wouldn’t make sense to go out on the street now. In fact, nobody took the chance on that day to get direct feedback from customers so early.

Granted: feedback in the sense of criticism can indeed be very painful. It can really hurt to learn that the customer doesn’t like the new feature as much as you do.

But 1. the feared pain in your own imagination is usually much worse than what actually happens. In reality it is not so easy to get honest and unvarnished feedback – you can read about it in the small but highly recommended book “The Mom Test”.

And 2. the more mature your idea is, the more intense the pain becomes. The fresher the idea and the less time, effort and heart and soul has gone into it, the faster the pain goes away. Conversely: If I nurture an idea for weeks and months in a quiet little room, then strong criticism can tear the ground from under my feet. I think Ash Maurya’s motto hits the nail on the head: “Love the Problem, Not Your Solution!” You fall in love with your own idea very quickly and then you like to close your eyes to reality, close your ears to criticism. Until at some point you start looking for a suitable problem for your own solution – an all too often impossible undertaking.

The good news: You can consciously break this vicious circle, you can simply jump into the cold water and expose yourself to criticism. The more often you do this, the less pain you feel. You get used to it. And even the opposite happens: Very often I see shining eyes after people have spoken directly to users for the first time!

Again, the higher the frequency of customer feedback, the lower the respective pain potential. Shorter iterations are therefore risk reduction.

Another example: I know of banks that update their systems at most once a quarter so that they can use a highly complex process, with various integration tests, to eliminate as many risks and side effects of the changes as possible. Before such a release, many of those involved are quite nervous every time – because if something does go wrong, then in the worst case a whole quarter’s work of several teams is affected.

Barclaycard, on the other hand, with transactions worth £600 billion every day, a company that is determined to avoid downtime and serious errors, now updates its own systems several times a day. (Source: Jonathan Smart.)

This sounds highly risky, but it is exactly the opposite: the smaller the changes, the lower the potential for damage, and the faster and easier it is to undo a failed change without having to undo all other changes (and thus possibly even legally required changes) as well, as would be the case with a major quarterly release.

Some people might think that this sounds great, but that it requires a lot more – for example, modular software architecture, test automation, etc. That is certainly true.

But whatever works: Get out of your ivory tower and talk directly to your customers and users. Or invite them to your premises, e.g. for a Sprint Review. The purpose of this Scrum event is “to review the product increment and adjust the Product Backlog if necessary.” [Scrum Guide] To fulfil this purpose, the participants include, among others, “important stakeholders” who are invited by the product owner. And who is more important than customers and users? At least if you want to develop the product in a truly customer-oriented way.

Unfortunately, I very rarely see real end users in the Sprint Review. Sometimes I do see clients and other stakeholders – mainly internal, less often external clients and stakeholders. Too often the Sprint Review is a purely internal event of the Scrum Team. How exactly should new insights for product development arise?

At this point I would like to repeat the core statement of the first section: Without planning and review it is difficult to gain new insights. As long as I remain in my own ivory tower, I will not learn whether our plan to make users and customers happy with the new product and to land a sales hit will actually work out. Until then, product development remains a game of chance. Some can afford it, many cannot.

You just can’t let go, part 2: You simply use new metrics to continue to manage people

The first section dealt with empirical data, planning/targeting and (control) measurement. This sounds familiar at first: Metrics, KPIs and other benchmarks existed before everyone started talking about “agility”. Correct. So we don’t need to change that much. Wrong. Well, let’s replace traditional metrics like “person days” with agile metrics like “story points”. Please don’t!

Agility is based – in addition to the empiricism emphasised in the previous two sections – on self-organisation and empowerment, among other things. And behind this lies a very specific image of mankind (see part 3): First of all, I assume that all participants are committed and give their best for the common goal. As a manager, my main task is to create the conditions that enable the teams to work successfully.

Key figures then belong where they are needed and can be interpreted. In other words, where you know the context so that you can make decisions. A good example is the “velocity”, i.e. the speed or throughput of a Scrum Team. A good Scrum Team wants to become more and more productive itself, i.e. achieve more outcome per sprint. To achieve this, the team keeps an eye on its own velocity, analyses any downward deviations and takes measures to become faster and better. Sometimes the causes of negative tendencies are beyond the team’s scope; then it needs support and turns to the responsible manager with a request for help.
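How a team might keep an eye on its own velocity can be sketched in a few lines. The function name, the window of three sprints and the 80 % threshold are assumptions chosen for illustration, and the numbers are invented. The output is intended for the team's own retrospective, not for reporting upwards.

```python
def velocity_dips(velocities, window=3, threshold=0.8):
    """Return the indices of sprints whose velocity fell below `threshold`
    times the average of the preceding `window` sprints.

    Hypothetical helper: the parameters are illustrative defaults, not a
    recommendation.
    """
    dips = []
    for i in range(window, len(velocities)):
        recent_avg = sum(velocities[i - window:i]) / window
        if velocities[i] < threshold * recent_avg:
            dips.append(i)
    return dips


# Invented story points completed per sprint, oldest first.
velocities = [21, 23, 22, 24, 15, 22]
dips = velocity_dips(velocities)
print(dips)  # indices of sprints the team may want to talk about
```

A flagged sprint is only a trigger for a conversation about causes; whether the dip was a holiday week, a production incident or something outside the team's control is exactly the context that only the team has.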

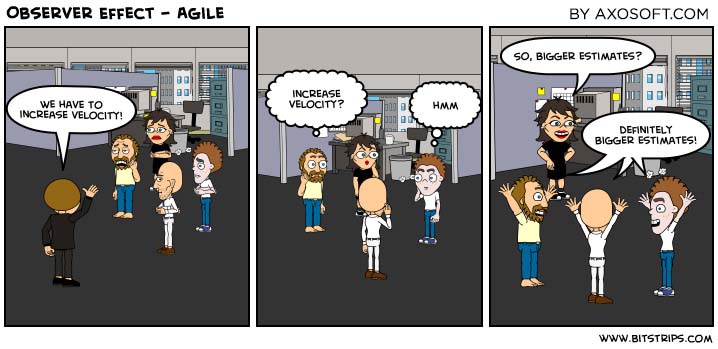

If, on the other hand, I continue to understand and live my role as a manager by the motto “Trust is good, control is better”, then I naturally ask for the current key figure “Velocity” after each sprint. If there are deviations downwards, I quickly give feedback that this is going in the wrong direction and demand an explanation. But I’m not really interested in excuses. After all, the team is agile and therefore fully responsible! So I slowly increase the pressure so that they don’t think that I’m just letting them get away with the fact that they’re getting lazy or careless and make it clear that I expect the velocity to increase again by the end of the quarter. What can happen then, the following little picture shows very nicely from my point of view:

The real problem here is not that the Scrum Team “hacks” the key figure reporting and thus deceives the manager, but the risks and side effects that come with it:

The important key figure “velocity” has thus become useless for the self-control of the team. Either it must now collect a second, real velocity for internal use only – which on the one hand creates additional effort and must also remain top secret – or the team simply leaves it at that: Why bother to get better and better yourself if all you get is distrust?

Control via key figures is therefore a very effective method to destroy intrinsic motivation and prevent agility!

If feedback plays a key role in the success of agile, the key to effective agile measurement is to understand measurement in the sense of feedback – not as a traditional means of leveraging certain behaviors. Feedback helps to improve your own performance. Levers are used to influence others. The difference lies therefore more in the way one uses measurement than in the measurement itself. [heise.de]

But as I wanted to express in the first two sections: empiricism, i.e. planning and measuring and learning from it, is an essential component of agility. So it would be the wrong way to demonise key performance indicators per se, just because you intuitively associate key performance indicators with “command & control” management, perhaps. All tools, models and principles, and thus also KPIs, are neutral at first; it always depends on how people actually use them.

And you can certainly use KPIs with an agile attitude:

In order to be able to interpret key figures correctly and thus use them as a basis for good decisions, you need to know the respective context. Decisions and key figures should remain as decentralised as possible. (The trick is then to identify patterns across teams nevertheless, without falling back into “old” behaviour patterns yourself.) Good key figures are therefore primarily catalysts for clarifying discussions.

Moreover, key figures are always only a means to an end, never an end in themselves. And since the world is changing and we are constantly learning together, it is part of a critical and agile attitude to constantly question whether key figures are still useful, no longer useful or even counterproductive.

You are confusing agility with anarchy, part 2: Without endurance and discipline you will quickly run out of breath

In the first sections you could already read it between the lines: Agility thrives on the discipline of those involved! In my experience, it even requires more discipline than the classic project approach, which at first glance is strictly regulated. At least in the classic projects in which I was involved, i.e. those with waterfall-like phases and milestones, there were long stretches in between with a lot of freedom, in which one could work without much pressure and without being constantly observed, even on topics that were perhaps not absolutely necessary. Only at the end of the project or shortly before an important milestone or steering committee did things get hectic.

You may know this phenomenon as the “student syndrome” – Eliyahu M. Goldratt coined this term and, in the Critical Chain Project Management he designed, he proposes to delete the implicit buffers that make postponement and unnecessary work possible in the first place from the project plan and to place them explicitly at the end.

In Scrum you follow a similar path, not working towards a distant goal, but constantly (every 1-4 weeks) delivering something and checking if it works as desired or planned. It is not without reason that the Scrum Guide says that every sprint is to be understood as a (short) project. This creates a continuity of work. In addition, there is maximum transparency and close cooperation within the team. The result: There is simply no time for unplanned extra tasks, special treatment, gold-plating or just dawdling around. And if someone does try, it is usually quickly noticed within the team and (hopefully and at the latest) tackled in the next joint retrospective.

In my experience, informal social pressure in self-organised teams has a much stronger effect on discipline than a formal project manager ever could. (And this can be seen quite critically, but I don’t want to discuss this further here and now).

By the way, loafing around and free experimentation is not bad per se, it is even a good prerequisite for innovation. It is always important to find a good balance and, above all, to create transparency to make trust possible. Hackathons, barcamps, marketplaces of the makers, design sprints or slack time are ways to explicitly allow time for free innovation and exchange.

But back to the seemingly agile reality: If everyone does what he or she wants to do, if a large part of the team shows up late for meetings, if the Scrum events are constantly postponed and the topics are mixed up, if sprints are extended because one has not quite finished, if agreements within the team are not kept, then this has nothing much to do with agility. But if you are somehow successful with it: wonderful! But if you don’t succeed in this way, then you shouldn’t blame the supposed agility for it.

But the Agile Manifesto says that it is more important to react to changes than to follow a plan, doesn’t it? That is correct! But if it means throwing everything overboard every 5 minutes and re-planning, then it’s simply very unlikely that there will ever be a result to satisfy the customer.

Agility does not mean to stop thinking for yourself! On the contrary!

Scrum uses the sprint as a stability factor: the sprint is protected, only in exceptional cases a sprint is aborted when it has become completely obsolete. The sprint length is then always a compromise between “having to react quickly to changes” and “being able to work in peace”. If it does not seem possible to allow yourself at least 1 week planning security, then Scrum is probably no longer the appropriate framework, then it might be better to build on a Kanban model.

Perhaps agility can also be described that way: It is about reacting as quickly as possible to changes in user behavior and to changing customer demands. And to enable this maximum flexibility in working on the product, the highest possible stability, reliability and discipline in teamwork are required. By eliminating the need to think about roles, meetings and other arrangements for collaboration during implementation, you can focus fully on the product and customer needs.

And here, too, the rule is: Don’t overdo it! There is not only black and white! Because if you reflect too seldom on teamwork and adapt it to changing conditions, the team will eventually collapse or fall apart.

In essence, as always, it comes down to finding a healthy balance. In both dimensions (work on the product and cooperation in the team) compromises are needed to remain productive. Changes in product development that are too fast are at the expense of productivity, as are changes in teamwork that are too slow.

You just can’t let go, part 3: No! Tools don’t make you agile! Sorry, they don’t!

Wonderful, you have weighed all the options, started the first agile pilots and came to the conclusion: Agility is our way!

And how often do I observe the typical reflex that was trained for decades: Which tool do we introduce for this?

After all, how can a change in structures, processes, roles, procedures, communication patterns, etc. be successful without adequate tool support? Every change brings new tools. And vice versa.

I can relate to that very well. After all, I once introduced powerful IT systems for project management and multi-project management. I was convinced that these tools were at least important and useful, and in some cases even absolutely necessary prerequisites for successful project work.

Until I learned in 2011, at the first PM Camp in Dornbirn, in the session “Des Agilen Pudels Kern” (The Agile Core) by Eberhard Huber, that according to an evaluation of more than 300 IT projects, the use of “heavy PM tools” has, statistically speaking, a minimal negative effect on project success and the use of “communication tools” a minimal positive one. So the use of powerful PM systems, as I was introducing them to customers at that time, causes only minimal damage! This reassured me only to a limited extent.

It is not for nothing that the Agile Manifesto says: Individuals and interactions over processes and tools. After all, it is people working together who develop successful products. Processes and tools can and should help people, but are never an end in themselves.

But now back to the initial situation: We want to work agile as quickly as possible and as successfully as possible. Do we really not need a special tool for this?

Focusing on the tool right at the beginning is risky in several ways: You suggest that the new tool is a necessary prerequisite for agility, so some people will wait until the tool is ready. And you suggest that the upcoming change is probably not so special or profound – after all, when the new CRM system was introduced, hardly anything else changed …

So why not start with what is available? A free space and a large stock of sticky notes and good pens are usually enough to get a team started. Or you can use the IT systems that are already there. If at some point the process has reached a certain stability and the call for better tool support comes up: Wonderful!

Another trap that one then all too readily falls into is to define the tool that has proven valuable to a team as a uniform standard for everyone. Sure, that is efficient. And I understand the reflex to avoid a proliferation of different tools (also for security reasons). And if a standard solution has already been agreed upon in house, then it does make sense to standardise its use. After all, with identical processes and rules, it is easier to switch from one team to another, among other things. And with uniform handling it is also much easier to understand and compare the figures of the individual teams.

Wait: Comparing numbers of different teams with each other? My alarm bells are ringing! Not for you? Perhaps you’d better read the section on key figures above again?

In any case, there is a great danger that the new “agile” tool will quickly degenerate into a compulsion and an end in itself and will no longer be useful, at least not for everyone.

Looking back: When I was still introducing PM systems, I saw many powerful PM tools in companies that had degenerated into pure time recording systems. Originally, the intention was to use them to support project work. And since the tool is there anyway, you can also use it to record times. For the recorded times to be processed further directly, e.g. for internal activity allocation or for invoicing customers, certain structures must be adhered to. And as a result, the tool could no longer be used for planning and controlling most projects. So every project again had to find its own solution. Whether the investment in the powerful PM system was worth it in the end, I dare to doubt – there would probably have been cheaper (and better) solutions for time recording.

But surely that is no longer the case today? Recently I have heard more and more “agile” companies moaning about the ever-increasing bureaucracy that comes with using the “agile” tool.

A tool does not make an organisation agile. In the best case it can support agile practices (“doing agile”). The prerequisite for this is that the actual users can benefit from it and adapt it to their changing needs. In the worst case, the tool leads to the fact that you cannot get beyond some of the agile practices anchored in the tool, that you play agile theatre, that you have no chance at all to develop an agile attitude (“being agile”)!

By the way, I recently observed something like the opposite effect: If you preach like a prayer wheel that the best way to communicate is face to face in the same room, then you shouldn’t be too surprised if teams get the idea that a retrospective via video conferencing doesn’t make sense.

They want diversity and at the same time demand that everyone should now be an open, extroverted team player: Agility can do diversity!


The inclined reader will have recognised a pattern in the previous sections: Exaggerations and extremes are dangerous! In my opinion, agility is above all common sense, reacting to changes and weighing things wisely.

All the more I am always perplexed when people are judged in the name of agility: “He simply can’t do it! She’s just not a team player!” Or something like that.

First of all, I would like to refer once again to the agile view of human beings, which I discussed in more detail in the third part. On this basis, I find such sweeping judgements difficult. Maybe the judgement is even true in one case or another. But I personally find it difficult to pass such a judgement on another person; others evidently find it easier.

In the description of agility, the team is usually highlighted and (in contrast to management approaches) it is emphasised that there are no titles and no formal hierarchy in the team. However, it is wrong and dangerous to deduce from this that everyone in the team is or should be the same.

In fact the opposite is true! As already briefly mentioned in part 2 of my article series: The more complex the situation, the more complex the solution system should be. (Freely adapted from W. Ross Ashby.) This means that if we assume a complex/dynamic situation as the starting point (see Part 1), we should ensure that the solution system is as complex as possible, in other words, as diverse as possible!

And diversity does not mean: uniformity! It means differences: different CVs and education, different cultures, different perspectives.

In my opinion, this also means that it is okay if, for example, someone in the team is rather introverted and does not become active himself in the retrospectives to present his own points. It is then the task of the team and especially of the coach or Scrum Master to find or offer ways for each person to contribute in his or her own way.

It is also ok at first if someone has only limited interest in the work of his colleagues and prefers to work on a complicated algorithm all by himself. This does not make him or her a worse person.

And yes: maybe someone really doesn’t fit into the team. Because that would be too big an adjustment for everyone involved. It can happen. But maybe together you can find a constellation that suits everyone? Maybe as a kind of satellite to the team? As someone who moves between several teams? Or even in a special position for tasks that are better worked on alone than in a team?

The agile values and principles that come to my mind spontaneously are

- openness,

- self-organisation and

- respect!

And sometimes it takes courage to find new solutions in order to preserve individuality and at the same time make it useful for the team!

Outlook

This time again the article has become much more detailed than I had planned. I hope I was able to give you some hints in this fourth part of my little series about what might be the reason why agility might not work for you the way you want it to. And maybe you have also found one or two helpful impulses for what you could perhaps do differently.

And by the way: I see light at the end of the tunnel. But another article is definitely coming. Be curious.

Notes:

And here you will find additional parts of articles on Agility? We tried it! Does not work!

Heiko Bartlog

Heiko Bartlog has more than 25 years of experience in projects, as a consultant, trainer, coach and entrepreneur, covering many different areas. As a ‘host for co-creation’, he supports organisations on their path to good cooperation and successful development. His repertoire includes approaches such as Scrum, Effectuation, Lean Startup, Management 3.0 and Liberating Structures. As a ‘mentor for mental fitness and vitality,’ he supports leaders in unlocking their potential.