What is a False Positive?

Smartpedia: A false positive is the result of a check which wrongly (false) identifies a (positive) match of defined criteria.

False alarm

A fire detector that goes off without a fire. A pregnancy test that indicates pregnancy although there is none. An e-mail that is classified as spam even though it is a message from a business partner. There are numerous examples of so-called false positives. A false positive occurs when a check or test wrongly (hence: false) detects a match of criteria, usually a binary one (hence: positive), although this match does not exist. Such a case is often called a “false alarm”.

Causes for false positives

Just as there are many examples of false positives, there can be many concrete causes. Of course, there is a difference between a doctor identifying an anomaly during an ultrasound examination that turns out to be harmless in a follow-up examination, a motion detector switching on the garden light because a cat scurries across the property, and the silence of employees during a company decision being interpreted as approval.

Possible reasons for false positives include:

- Error. The simplest reason is certainly an error in the check. Example: Body scanners at the airport indicate a “site to be examined”, although there are no (metallic) objects there.

- False comparison values. Example: A requirement has changed in the course of development and the implementation has been adapted, but the test case has not. If the implementation is now tested against the obsolete comparison value, the test reports an error even though the implementation is correct.

- Incorrect comparison periods. Example: Google Analytics, a tool for measuring visits to web pages, sends a report indicating a significant drop in visitors. The report compares weekly visitor numbers but does not take into account that a B2B website normally has fewer visits during Christmas week than in the week before Christmas. So it is not an anomaly; it is the norm.

- Warnings. Example: The German Federal Office for Information Security (Bundesamt für Sicherheit in der Informationstechnik, BSI) warns against malware that may be pre-installed on Chinese mobile phones. Such a general warning also applies to devices that are not affected at all.

- Missing features. Example: Anti-virus software prevents a file downloaded from the Internet from being installed because a manufacturer certificate is missing, even if the file is harmless.

- Existing properties. Example: A spam filter checks whether a certain word appears in an incoming e-mail and refuses delivery if it finds it, e.g. “offer” in the subject line. The spam filter ignores whether the e-mail is a request from a potential customer. The same applies if links or attachments appear in the e-mail.
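The Google Analytics example on incorrect comparison periods can be illustrated with a small sketch. The visitor numbers, the 30 % threshold and the function `is_anomaly` are hypothetical and not taken from any real tool; the point is only that the same alert rule produces a false positive when Christmas week is compared with the week before, but not when it is compared with the same week of the previous year.

```python
def is_anomaly(current: int, baseline: int, threshold: float = 0.30) -> bool:
    """Flag an anomaly if visits dropped by more than `threshold` relative to the baseline."""
    drop = (baseline - current) / baseline
    return drop > threshold


# Hypothetical weekly visitor numbers for a B2B website
week_before_christmas = 1_000
christmas_week = 400
christmas_week_last_year = 420

# Week-over-week comparison: reports an anomaly (a false positive)
print(is_anomaly(christmas_week, week_before_christmas))     # True
# Comparison with the same week of the previous year: no anomaly
print(is_anomaly(christmas_week, christmas_week_last_year))  # False
```

The check itself is identical in both calls; only the choice of comparison period decides whether an alarm is raised.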

What would the anti-virus software do if it knew that the file was a prototype to be installed from within the company? What would the spam filter do if it knew that the e-mail with a link came from the private mail account of its own managing director? Tools that compare values and time periods or check properties for their presence or absence often lack logic and/or knowledge of the context. This is probably the most common reason for a false positive.
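The difference between a pure property check and a check with some context can be sketched as follows. The `Email` type, the word list, the sender addresses and both filter functions are hypothetical illustrations, not the logic of any real spam filter. The naive filter only looks for the word “offer” or a link; the context-aware variant additionally knows a list of trusted senders, such as the managing director's private account.

```python
from dataclasses import dataclass


@dataclass
class Email:
    sender: str
    subject: str
    has_link: bool = False


SUSPICIOUS_WORDS = {"offer"}


def naive_is_spam(mail: Email) -> bool:
    """Property check only: flag the mail if it contains a link or a suspicious word in the subject."""
    words = mail.subject.lower().split()
    return mail.has_link or any(word in SUSPICIOUS_WORDS for word in words)


def context_aware_is_spam(mail: Email, trusted_senders: set[str]) -> bool:
    """Same check, but mails from known, trusted senders are never flagged."""
    if mail.sender in trusted_senders:
        return False
    return naive_is_spam(mail)


request = Email(sender="buyer@example.com", subject="Request for an offer")
ceo_mail = Email(sender="ceo.private@example.org", subject="Slides for tomorrow", has_link=True)
trusted = {"ceo.private@example.org"}

print(naive_is_spam(request))                   # True  (false positive)
print(naive_is_spam(ceo_mail))                  # True  (false positive)
print(context_aware_is_spam(ceo_mail, trusted)) # False
print(context_aware_is_spam(request, trusted))  # True  (still a false positive)
```

The extra knowledge removes one false positive, but the request from the unknown potential customer is still flagged: a filter can only be as good as the context it has.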

The opposite of false positive

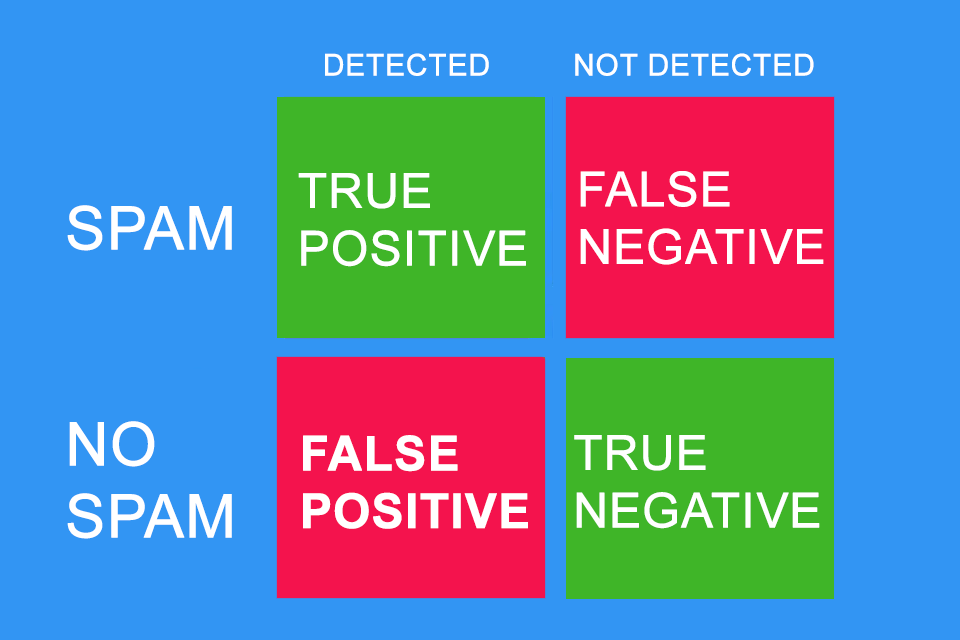

What is the opposite of false positive? It can be easily explained using the example of a spam filter.

- False positive: An e-mail is falsely declared as spam and ends up in the spam folder.

And what is the opposite?

- True Positive: An e-mail is rightly identified as spam and ends up in the spam folder.

- False Negative: A spam e-mail does not end up in the spam folder but in the inbox. It is wrongly classified as a normal e-mail.

- True Negative: A “normal” e-mail is correctly delivered to the inbox.
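The four outcomes depend only on two yes/no values: the filter's decision and whether the e-mail really is spam. A minimal sketch of this mapping, with a hypothetical function `classify`:

```python
def classify(predicted_spam: bool, actually_spam: bool) -> str:
    """Map a spam filter decision and the ground truth to one of the four outcomes."""
    if predicted_spam and actually_spam:
        return "True Positive"
    if predicted_spam and not actually_spam:
        return "False Positive"
    if not predicted_spam and actually_spam:
        return "False Negative"
    return "True Negative"


# (filter decision, ground truth) for four hypothetical e-mails
cases = [(True, True), (True, False), (False, True), (False, False)]
for predicted, actual in cases:
    print(f"spam detected={predicted}, really spam={actual}: {classify(predicted, actual)}")
```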

You can also imagine the whole thing as a quadrant (often called a confusion matrix). Two key metrics can be derived from it:

- “Precision” and

- “Recall”.

While “Precision” answers the question “How many of the items found are relevant?”, “Recall” answers the question “How many of the relevant items were found?”. Precision thus measures the share of relevant items among the results, while recall measures how completely the relevant items were found.

This can be expressed in formulas as follows:

Precision = True Positive / (True Positive + False Positive).

Alternatively, one could also say: Precision = True Positive / All Predicted Positives (i.e. all items found).

Recall = True Positive / (True Positive + False Negative).

Alternatively, one could also say: Recall = True Positive / All Actual Positives (i.e. all relevant elements).

Both values can be combined into the so-called F1 score: F1 score = 2 * ((Precision * Recall) / (Precision + Recall)).

Example: Imagine Google returns 1,000 results for a search query, of which only 200 are relevant. Another 600 relevant results are not displayed at all. This results in: Precision = 200 / 1,000 = 1/5 and Recall = 200 / (200 + 600) = 200 / 800 = 1/4.
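The search example can be recalculated with a short sketch that implements the formulas above. The function names are illustrative; the numbers are the ones from the example (200 true positives, 800 false positives, 600 false negatives). The F1 score, which the example does not state, comes out at 2/9, roughly 0.22.

```python
def precision(tp: int, fp: int) -> float:
    """Share of relevant items among all items found: TP / (TP + FP)."""
    return tp / (tp + fp)


def recall(tp: int, fn: int) -> float:
    """Share of relevant items that were found: TP / (TP + FN)."""
    return tp / (tp + fn)


def f1_score(p: float, r: float) -> float:
    """Harmonic mean of precision and recall: 2 * (P * R) / (P + R)."""
    return 2 * (p * r) / (p + r)


# Search example: 1,000 results shown, 200 of them relevant, 600 relevant results not shown
tp = 200          # relevant results that were displayed
fp = 1_000 - 200  # displayed results that are not relevant
fn = 600          # relevant results that were not displayed

p = precision(tp, fp)
r = recall(tp, fn)
print(p, r, round(f1_score(p, r), 4))  # 0.2 0.25 0.2222
```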

The consequences of a false positive

Just as there are many examples and reasons, there are numerous consequences of false positives. It may not be a big deal if an e-mail ends up in the spam folder by mistake, but it might cost you a new customer. If a software test identifies an error, it is important to correct it. With a false positive, however, the reported error does not exist in reality, so only the test needs to be adjusted so that the test case runs without an error message in the next test run. A false negative would be worse: a “successful” test that overlooks an error.
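A minimal sketch of such a test false positive, based on the “false comparison values” cause described earlier. It assumes a hypothetical requirement change from a 10 % to a 15 % discount; the function `discounted_price` and all values are invented for illustration. The implementation follows the new requirement, but the test still compares against the obsolete expected value and fails, although the code is correct. Updating the expected value is the only fix needed.

```python
# Hypothetical requirement change: the discount was raised from 10 % to 15 %.
NEW_DISCOUNT = 0.15


def discounted_price(price: float) -> float:
    """Implementation already adapted to the new requirement (15 % discount)."""
    return round(price * (1 - NEW_DISCOUNT), 2)


def check(actual: float, expected: float) -> bool:
    """Simple test check: passes only if the actual value matches the expected one."""
    return actual == expected


OBSOLETE_EXPECTED = 90.00  # 100.00 with the old 10 % discount
CURRENT_EXPECTED = 85.00   # 100.00 with the new 15 % discount

actual = discounted_price(100.00)
print(check(actual, OBSOLETE_EXPECTED))  # False: test fails although the code is correct
print(check(actual, CURRENT_EXPECTED))   # True: the adjusted test passes
```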

Both false positives and false negatives increase the effort in organisations, but the consequences of a false negative are often much more serious. Better test plans, test cases and test environments can help here.

In practice, many small functions have proven to be effective in limiting the consequences of a false positive:

- The spam filter does not delete a mail directly, but moves it into a “quarantine”.

- The anti-virus software allows installation “at your own risk”.

- Banks send information via text message to account holders to draw attention to account movements.

- Cloud providers send notifications if there is a logon to the cloud from an “unknown” device.

- …
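The quarantine approach from the first item can be sketched as follows. The `Mailbox` class and its methods are hypothetical and only illustrate the idea: a flagged e-mail is not deleted, so a false positive can still be recovered by moving it back to the inbox.

```python
from dataclasses import dataclass, field


@dataclass
class Mailbox:
    inbox: list[str] = field(default_factory=list)
    quarantine: list[str] = field(default_factory=list)

    def deliver(self, message: str, flagged_as_spam: bool) -> None:
        """Flagged mails are moved to quarantine instead of being deleted."""
        if flagged_as_spam:
            self.quarantine.append(message)
        else:
            self.inbox.append(message)

    def release(self, message: str) -> None:
        """Recover a false positive: move a quarantined mail back to the inbox."""
        self.quarantine.remove(message)
        self.inbox.append(message)


box = Mailbox()
box.deliver("Request for an offer", flagged_as_spam=True)  # a false positive
box.release("Request for an offer")
print(box.inbox, box.quarantine)  # ['Request for an offer'] []
```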

The only way to stop the fire detector is to remove the batteries from the product. 😉

Notes:

Interestingly, false positives are usually mentioned in the context of devices (e.g. fire detectors) or software (e.g. spam filters), but they are also easy to find in everyday life. For example, if you visit the BSI, you have to hand in your smartphone at reception so that you cannot take photos on site or obtain “sensitive” information. You probably didn’t intend to take any photos or steal any information, but for an authority like the BSI the principle “safety first” matters more than how you feel about handing in your phone. In this case, you and your mobile phone are a false positive.

Here you can find more information about the F1 score.

Here you can find additional information from our t2informatik Blog: