Remote sprint review in Large-Scale Scrum (LeSS)

Integrating users and customers in product development has been a core pillar of the agile movement. Two of the four agile values are “Individuals and interactions over processes and tools” and “Customer collaboration over contract negotiation”. In Scrum, one of the most popular agile frameworks for product development, the Sprint Review is the only “official” event where developers and users/customers interact and collaborate. This article gives an overview of the purpose of the sprint review in Scrum and describes a real-life example of how a modified sprint review can be designed in Large-Scale Scrum (LeSS) with multiple teams working remotely.

The purpose of the sprint review

At the heart of Scrum is empirical process control, which distinguishes it from other agile frameworks. Instead of having a detailed defined process, Scrum uses short cycles to create small shippable product slices, and after each cycle it allows the team to inspect both the product and the process and adapt them as necessary. The sprint review allows for the continuous inspection and adaptation of the product, while the sprint retrospective does the same for the process.

In the sprint review, the users/customers and other stakeholders inspect the product increment together with the Product Owner and team(s), and the product backlog is adapted as necessary. This is done by letting everyone explore new items hands-on and discuss what’s going on in the market and with users. It is also the best moment to create or reaffirm a shared understanding among the participants of whether the product backlog still reflects the needs of the users/customers and the market, and to make changes to the product backlog in order to maximize the value delivered. Transparency, next to inspection and adaptation, is key for empirical process control. Calling the event a “sprint demo” and focusing just on a one-sided presentation without hands-on use of the product therefore misses the point of the sprint review in Scrum or LeSS.

Sprint review in Scrum

The 2017 Scrum Guide is quite explicit on the contents of the sprint review. It includes the following elements (quote):

- Attendees include the Scrum team and key stakeholders invited by the Product Owner;

- The Product Owner explains what product backlog items have been “done” and what has not been “done”;

- The development team discusses what went well during the sprint, what problems it ran into, and how those problems were solved;

- The development team demonstrates the work that it has “done” and answers questions about the increment;

- The Product Owner discusses the product backlog as it stands. He or she projects likely target and delivery dates based on progress to date (if needed);

- The entire group collaborates on what to do next, so that the sprint review provides valuable input to subsequent sprint planning;

- Review of how the marketplace or potential use of the product might have changed what is the most valuable thing to do next; and,

- Review of the timeline, budget, potential capabilities, and marketplace for the next anticipated releases of functionality or capability of the product.

The result of the sprint review is a revised product backlog that defines the probable product backlog items for the next sprint. The product backlog may also be adjusted overall to meet new opportunities.

The 2020 version of the Scrum Guide is shorter and less prescriptive in general, and its description of the sprint review is much shorter as well. The new Scrum Guide says:

During the event, the Scrum team and stakeholders review what was accomplished in the Sprint and what has changed in their environment. Based on this information, attendees collaborate on what to do next. The product backlog may also be adjusted to meet new opportunities. The sprint review is a working session and the scrum team should avoid limiting it to a presentation.

Sprint review in LeSS

LeSS (like Scrum) is a “barely sufficient” framework for high-impact agility. It addresses the question of how to apply Scrum with many teams working together on one product. For more on the LeSS framework please visit https://less.works.

In LeSS there is one rule regarding the sprint review (quote):

- There is one product sprint review; it’s common for all teams. Ensure that suitable stakeholders join to contribute the information needed for effective inspection and adaptation.

In addition, there are two guides and a couple of experiments related to the sprint review:

Guide: adapt the product early and often

This guide suggests keeping the cycles for creating new product slices as short as possible (and reasonable), sharing as much information about the customer market as possible, and discussing together what to do next, in order to maximize learning.

Guide: review bazaar

This guide suggests performing the first part of sprint review like a science fair: A large room with multiple areas, each staffed by team representatives, where the items developed are explored and discussed together with users, teams, etc. Beware: The review bazaar is not the full sprint review but only the first part! The second part of the sprint review consists of the critical discussions needed to reflect and decide on what to do next.

The guide provides some clear tips on how to organize the bazaar in a series of steps (quote):

- Prepare different areas for exploring different sets of items. Include devices that are running the product. Team members are at each area to discuss with users, people from other teams, and other stakeholders. Learning happens both ways! Include paper feedback cards to record noteworthy points and questions.

- Invite people – including other team members – to visit any area.

- Start a short-duration timer (e.g. 15 minutes) during the exploration. The timer creates a cadence to move on to another area.

- While people hands-on explore the items and discuss together, record noteworthy points on cards.

- At the end of the short cycle, invite people to rotate or remain for another cycle. These mini-cycles help a more broad and diverse exploration of all items.
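The timer cadence in these steps can be sketched in a few lines of Python. This is only an illustration, not part of the LeSS guide: the function name `bazaar_cycles`, the start time, and the 45-minute bazaar length are assumptions; only the 15-minute cycle length is taken from the example in the guide.

```python
from datetime import datetime, timedelta


def bazaar_cycles(start, total_minutes, cycle_minutes=15):
    """Return (cycle_start, cycle_end) pairs for the exploration mini-cycles.

    Only full cycles that fit into the bazaar timebox are returned;
    any leftover minutes at the end are not turned into a shorter cycle.
    """
    if cycle_minutes <= 0:
        raise ValueError("cycle_minutes must be positive")

    step = timedelta(minutes=cycle_minutes)
    end = start + timedelta(minutes=total_minutes)

    cycles = []
    cycle_start = start
    while cycle_start + step <= end:
        cycles.append((cycle_start, cycle_start + step))
        cycle_start += step
    return cycles


if __name__ == "__main__":
    # Hypothetical bazaar: starts at 10:00 and lasts 45 minutes.
    bazaar_start = datetime(2021, 1, 15, 10, 0)
    for number, (begin, finish) in enumerate(bazaar_cycles(bazaar_start, 45), 1):
        print(f"Cycle {number}: {begin:%H:%M} to {finish:%H:%M}, then rotate or stay")
```

A facilitator could read the rotation times off such a list and announce them at the end of each cycle, inviting people to move to another area or to remain for another round.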

After the bazaar, the most important steps of the overall meeting follow:

- People sort their feedback and question cards so that the Product Owner sees important ones first.

- The Product Owner leads the discussion on the market and customers, upcoming business, market feedback about the product, and broadly what’s going on outside. For that the Product Owner uses feedback cards.

- Most importantly for the entire Review, there’s a discussion – and perhaps a decision – about the direction of the next Sprint.

The second LeSS book provides some experiments related to the sprint review, like using video sessions, including diverge-converge cycles in large video meetings, or experimenting with multisite Scrum meeting formats and technologies. In this article I focus on a detailed example of the experiment “remote sprint review” and also combine many ideas from other experiments.

Remote sprint review in action

A LeSS rule related to the LeSS structure states that “Each team is (1) self-managing, (2) cross-functional, (3) co-located, and (4) long-lived”.

In current times, almost no team can work co-located, as every member is forced to work from home most of the time. Many teams are therefore experimenting with different ways to run LeSS events remotely. Here is an approach to a review bazaar that we used at one of my clients, Yello Strom AG. In the following description, I will share the steps that we used, and afterward I will share what I still believe can be improved.

The organisational context

I would like to start with an important remark regarding the organizational setup: We did not use LeSS at this client, as the goal of the area I worked with was a bit different from the system-optimising goal of LeSS. Nevertheless, our Sprint Review with multiple teams was very similar to how I would run a sprint review in LeSS.

To be specific about how we performed the sprint review, I will briefly introduce the organizational setup, which is different from LeSS. Beware that I would not recommend this setup if you are working with multiple teams on one product. At the end of the article, I will describe how I would run the sprint review differently in LeSS.

The client, Yello Strom AG, is a German electricity and gas provider, a subsidiary of EnBW. The area that I worked with at Yello Strom AG was responsible for innovation and discovering new business models. It consisted of 4 teams, each focusing on a different product.

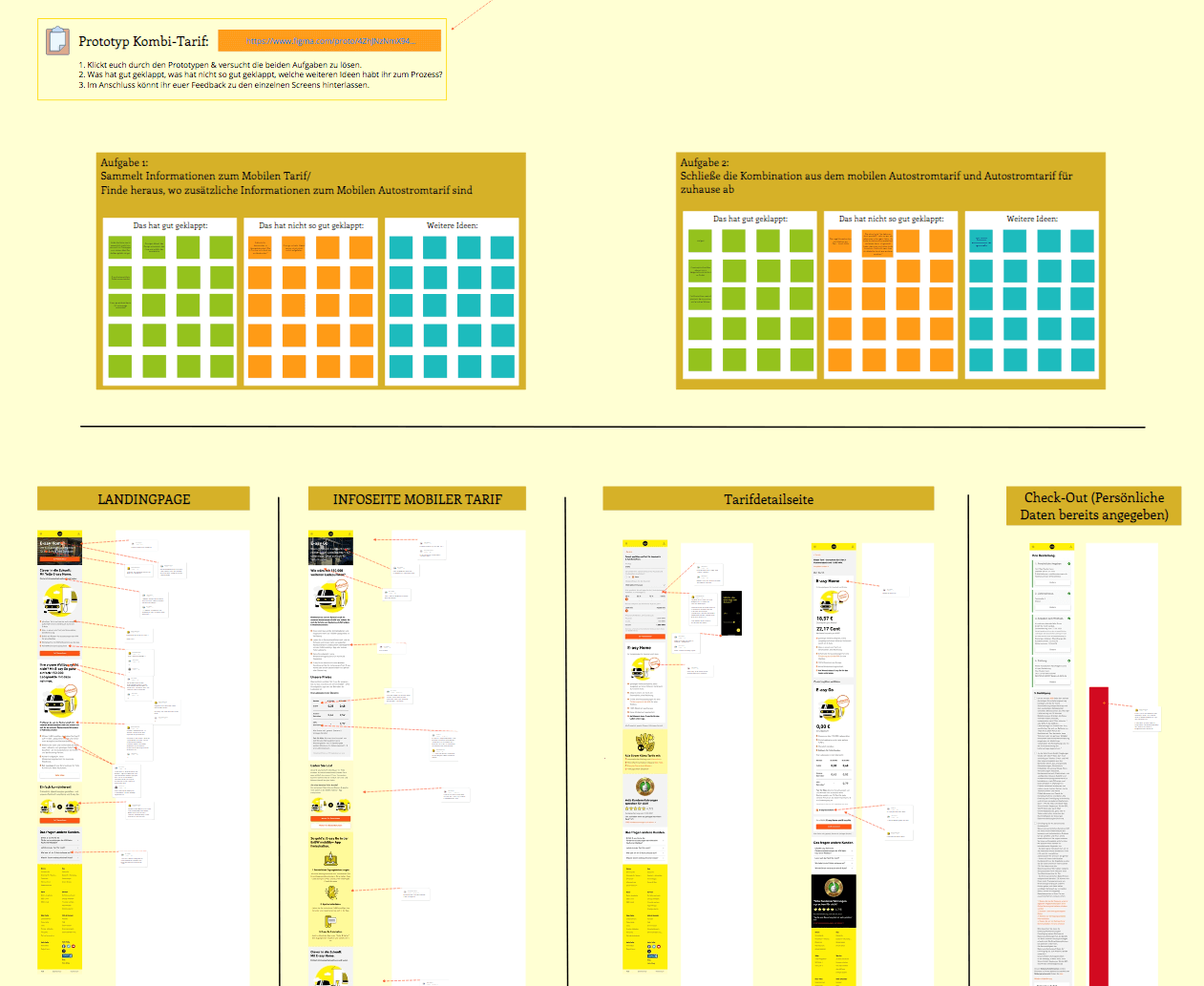

One team was creating a revolutionary app that allows people to understand the difference between driving an internal combustion engine car and an electric car. This app was designed to recommend an electric car that fits your personal needs, taking into consideration how often you drive each week, what kinds of trips you regularly make, whether you can charge at home, and many other things.

Another team was working on a platform for user-generated content that was meant to let people share personal reviews of electronic products that they are using. A third team was setting up a new electricity pricing model for people with electric cars. And the fourth team was writing and creating content for different (media) channels, like blogs, YouTube videos, Instagram, or Twitter posts.

Each team had a Product Owner and the department had a so-called business owner. Each team had its own product backlog. Some ran 2-week sprints, others 1-week design sprints, but every 2 weeks we performed this shared sprint review.

Sprint review setup

The tool that we used as a group to communicate was Microsoft Teams, which unfortunately didn’t support breakout rooms at that time, so we had to use some workarounds in this regard.

As most interaction between the participants should happen visibly, we were using Miro as an “endless” whiteboard. Here is where the agenda of the event was shared, where teams were preparing screenshots of the product parts they wanted to discuss in order to allow the participants to add comments and feedback, and also where other participant interaction happened.

One of the most essential parts of a remote sprint review is the preparation. Team members should always prepare the sprint review well, in order to get the most out of this inspect-and-adapt event. But, as we will see, for a remote event it is not only the product (increment) that needs to be prepared but also the ways in which participants can share feedback effectively. Our teams had to plan, on average, about an hour each sprint to prepare the Miro board for the sprint review and make this meeting effective. In addition, the PO usually prepared relevant information to share with the teams and the other participants.

Here is what the overall board looked like for one of our sprint reviews:

You will see parts of the board in more detail below.

Agenda

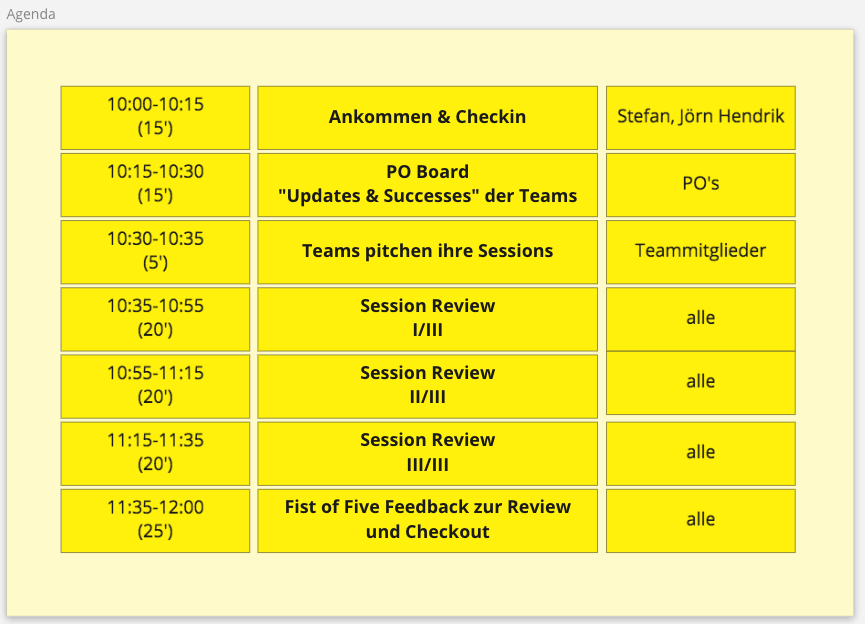

The next screenshot shows a typical Sprint Review agenda.

We had a time limit of no more than 2 hours, which worked for the most part, although sometimes I wish we had had a little more time. Our agenda consisted of 8 blocks:

- A short welcome and check-in.

- 10 min for company and department updates.

- A short status update on the state of the teams.

- The pitching of the teams.

- The (actual) review bazaar.

- The collection and summary of the feedback.

- The discussion of the next steps.

- A short feedback round on the sprint review itself.

We usually started our event by briefly going through the steps of the sprint review and introducing changes to the process if any had been decided in the previous event or sprint retrospective. We found that participants who attended this event for the first time benefited from an individual introduction to the process beforehand. This allowed us to keep the first item on the agenda short. This is also a good time to do a quick check-in, for example with participants typing a word into the chat box, posting a Giphy, or adding a picture in an area of the whiteboard.

This was followed by a few words from the business owner, where he could announce important company and department updates or introduce new hires.

Team status and session pitching

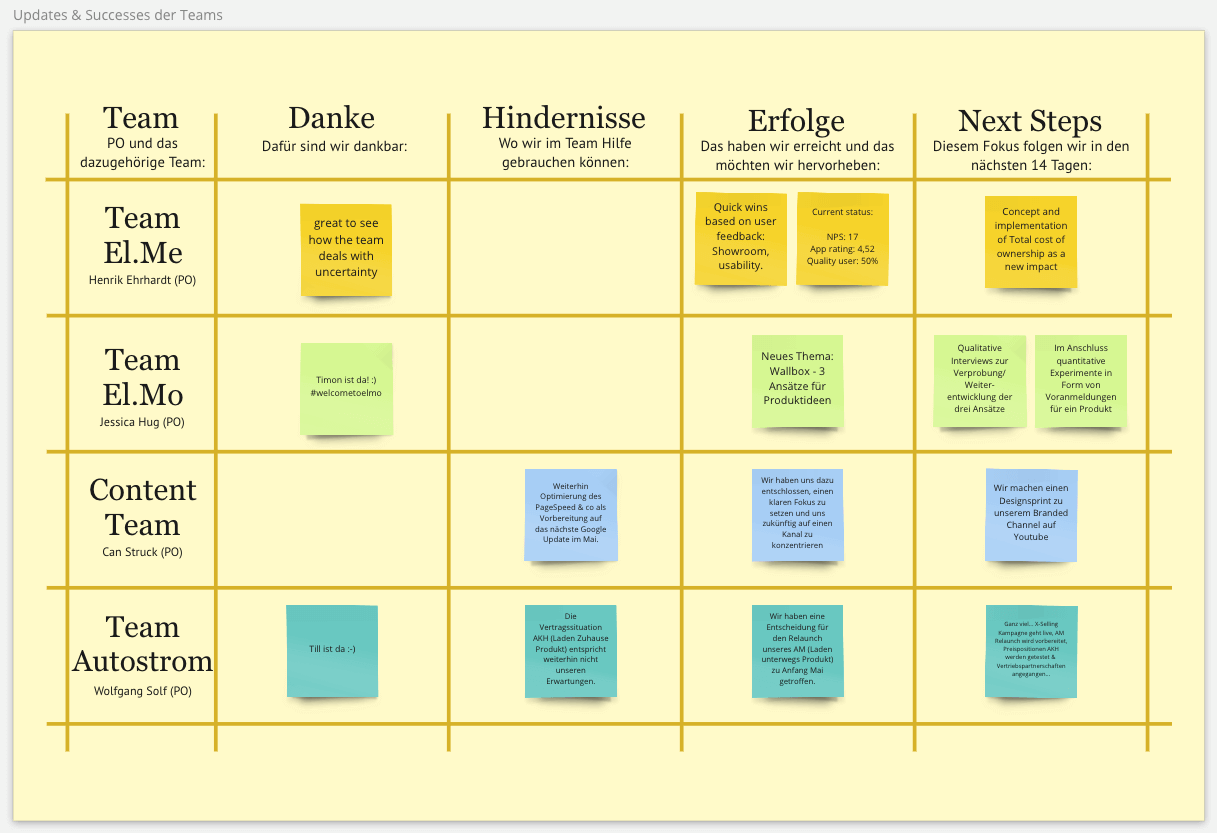

The third item on our agenda was a short status overview reflecting where the teams were on their respective journeys. As mentioned earlier, we had 4 teams working on 4 different products and this was the opportunity for each team to verbally and visually share what was happening: updates on usage figures, market updates, impediments and the road ahead. Mostly this was done by the team POs and took no longer than 5 minutes per team.

As you can see in the screenshot below, we created a table on the board for this with 4 columns (Obstacles & Help Requests, Successes, Thanks, Next Steps).

After that (or sometimes during the updates) each team gave a short introduction to the product increments that had been prepared to be explored in the review bazaar.

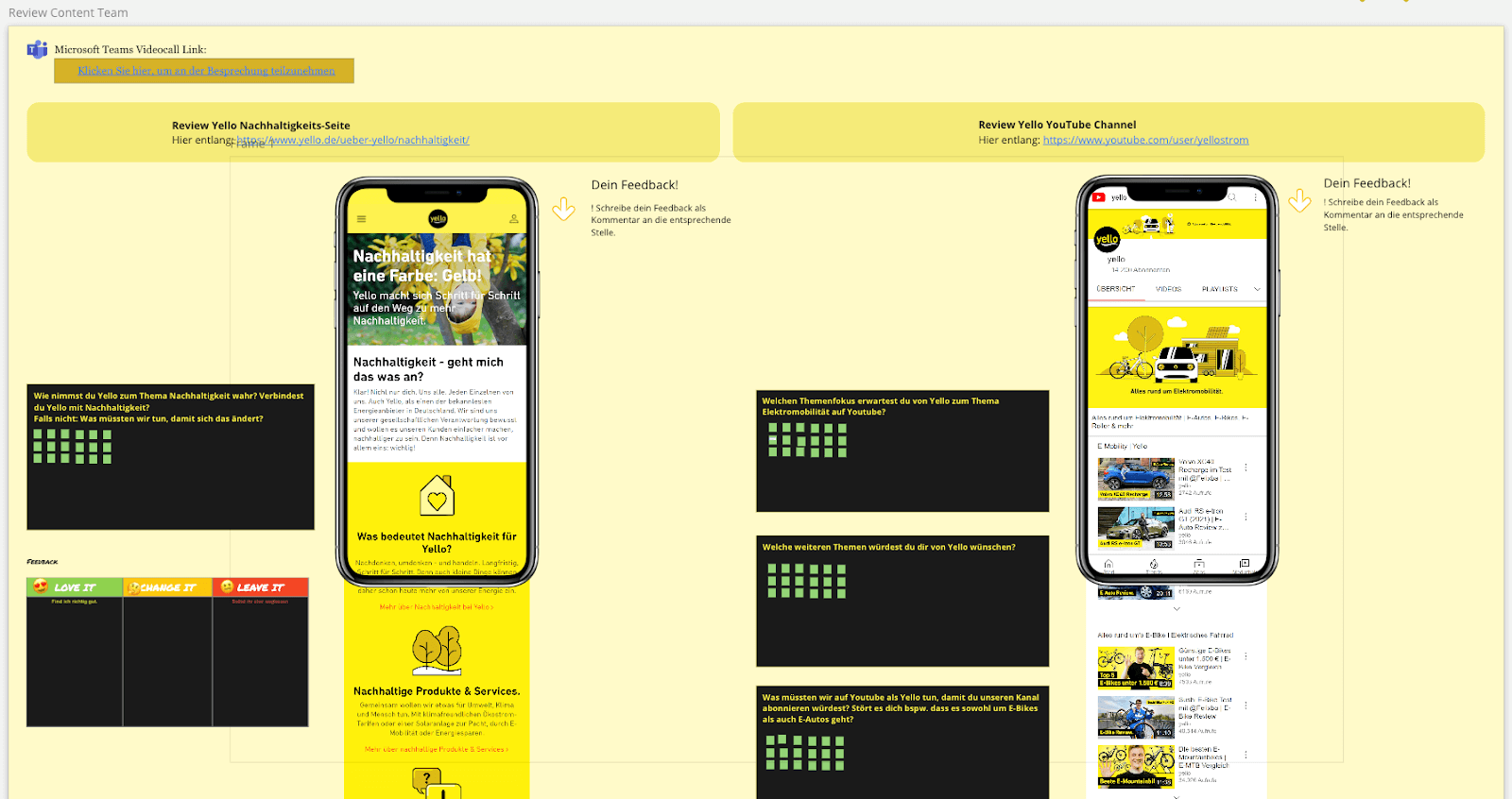

Each team that had something new to share prepared one or more sections on the board with instructions on where to find the product increments and how to review them. Sometimes the instructions were supplemented with key questions for feedback. Most of the time the teams added screenshots of the new or updated parts of the product (website or app). This made it very easy for participants to link the feedback to a specific area of the product. For general remarks and feedback each team also created a section with 4 quadrants (What worked? / What made me confused? / What didn’t work? / Any new ideas?).

The review bazaar

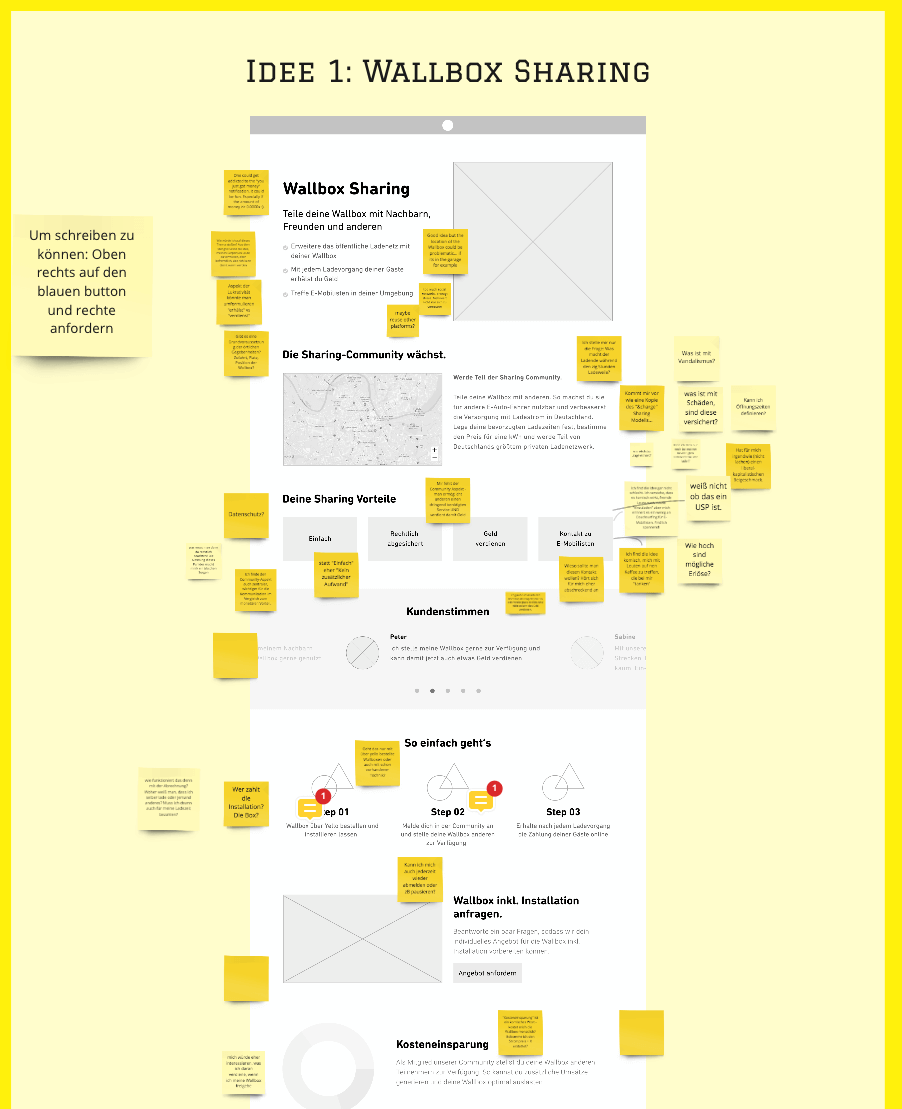

After about 45 minutes, the most exciting part of the review began. Participants started to move around the Miro board and explored the offerings. As mentioned earlier, the offerings were very diverse and the review was designed so that participants could choose which parts were most interesting to them and where they could contribute the most by rating what they saw and giving feedback.

The Content Team usually shared articles or YouTube videos that had been created and published in the sprint. Next to the link to the actual article, the team added a screenshot and a feedback quadrant (see screenshot below).

In other cases, teams added a link to a new functionality on a web page or a whole new landing page. This could either be a link to the live system or to another (staging) environment, and general instructions for access were added.

As mentioned earlier, the teams usually prepared a section with screenshots of the new functionality or a web page, and participants added post-it notes with comments right next to the screenshots. In addition to this, each board had a feedback quadrant for general feedback.

In some cases, it was a new functionality in a mobile app that was available for review. Here, teams usually prepared a more detailed description of how to install the app from a test server, sometimes by posting a link to a screenshot that included step-by-step instructions on how to install it. There was also a link to an MS Teams channel or a team member in Slack who could support participants if they had problems installing the app. Again, the team provided relevant screenshots from the app on a frame in Miro so that participants could attach post-its directly to the areas they had feedback on. There was also a feedback quadrant for more general feedback.

In other cases, there were just mockups, wireframes, scribbles or even data sheets that the team had been working on in the previous sprint. Here, too, they gave clear instructions on what type of feedback they needed.

In some cases, teams even prepared customer interviews for the sprint review. They asked participants to write their name in a timebox slot, and a team member who was skilled at conducting customer interviews had one or more participants experience the new functionality and answer questions.

Feedback review and backlog adjustment

The next step was to review and discuss the participant feedback. To do this, each team sat down with their product owner in their MS Teams channel and reviewed the post-its and discussed the written feedback as well as what they had observed during the interviews. This usually resulted in new PBIs being created and discussed in the next product backlog refinement, as well as PBIs being updated or reordered in the product backlog.

Sprint review feedback

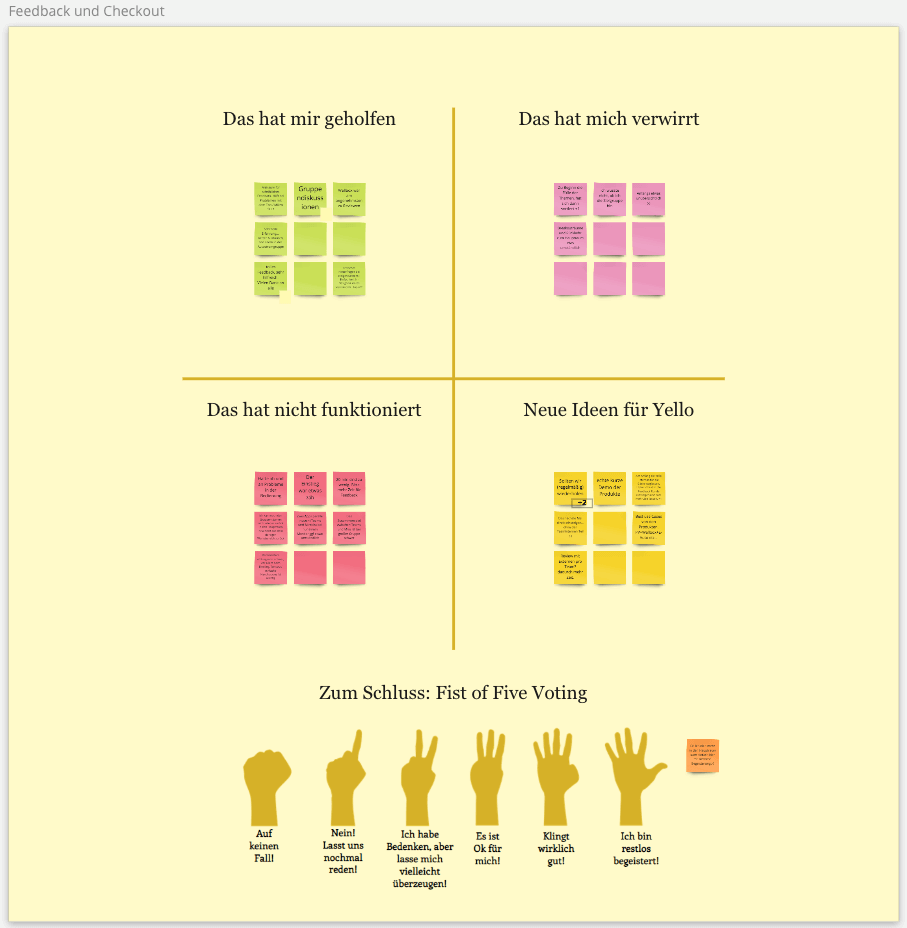

The last step of our sprint review was a short time slot to reflect on the sprint review itself. The participants had the opportunity to leave feedback and ideas on how to improve the sprint review. To enable this, we used another area of the board and the familiar four quadrants.

Suggestions for improvement

As mentioned already, this is not a typical LeSS sprint review, though the flow used might be very similar to the one in a LeSS sprint review. I will start by describing the differences from LeSS.

How is LeSS different from this approach?

Large-Scale Scrum (LeSS) offers, through its rules and principles, an organisational design that is optimised for adaptability at minimum cost and for customer value. In LeSS, there are no “Team Product Owners” and no “Team Product Backlogs”. To avoid local optimisation by increasing efficiency at team level, there is a common product backlog. This ensures that optimisation takes place at system level. Usually this makes most sense when multiple teams are working on one product, creating a clear need for all teams to work together to support the development of the area of the product that is most critical for the best possible customer impact. And even in a situation where multiple teams are working on multiple products in parallel, LeSS prefers to work with a single product backlog (and with a single product owner maintaining that product backlog) and broader product definitions because they

- lead to a better overview of the development and the product,

- avoid dependencies through feature teams,

- encourage collaborative thinking with the customer, focusing more on the real issues and impacts than on the desired requirements,

- avoid the development of duplicate functionality, and

- create simpler organisations.

So, as long as there is a shared (overall product) vision that encompasses multiple products, the same customers or the same markets, and the same domain knowledge is relevant, there is a strong case for extending the product definition to multiple smaller products.

In our case, the department was exploring multiple innovative products with multiple teams, so working with a single product backlog would have made a lot of sense, especially because the exploration and delivery of the products would have been extremely fast and adaptable. It would have allowed the organisation to increase investment in the more successful product on the fly, rather than working with a defined group of people for a defined period of time on multiple products, only to discard some products at a certain point and train team members to develop the more successful product.

In the concrete example, the products were very different in terms of the technology used and most of the team members were contractors, so working with several product backlogs and several product owners (i.e. without LeSS) also worked well. The interaction between the teams was very good, the efficiency at team level was high and the most promising product could be identified.

How to run the remote sprint review differently in LeSS?

In a real LeSS setup, you might want to run a few things differently. After check-in and before teams pitch their sessions, you could have just one block where the product owner communicates relevant changes in usage numbers, specific customer feedback and updates from the market.

You could include an area on the board where important numbers and other success stories (e.g. go-live of a functionality) are shared, but I would remove both the “impediments” and the “next steps”, or at least I wouldn’t discuss them at the beginning of the sprint review. The best time to discuss impediments would be during an overall retrospective (with all teams), and I prefer to discuss next steps at the end of the sprint review, incorporating all the feedback from it, not at the beginning.

After the remote review bazaar, the teams sort and group the input they received and summarise it. The product owner familiarises himself with this summary by moving back and forth between the areas on the Miro board or between the teams’ breakout rooms. Then all teams discuss with the product owner what feedback should be incorporated in the upcoming sprints and how this can be done. Often this leads to the creation of new items that will be defined in the next backlog refinement, the change of priorities of existing items or even the removal of items. This step in the sprint review is very important because it sets the direction for the development. It is advisable to plan enough time for this in sprint planning.

In my experience, the retrospective of the sprint review can be shortened by only providing a section for feedback on the board, or even omitting this section completely, and discussing the feedback in an overall Retrospective if needed.

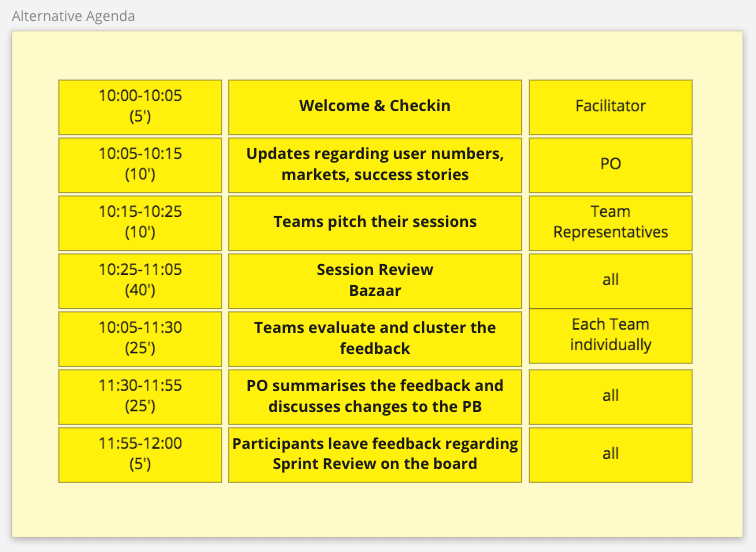

Here you can see what an agenda might look like:

I hope this article gives you an idea of how to facilitate a remote sprint review with multiple teams. I’d love to hear your feedback and/or questions.

Notes:

Robert Briese regularly takes participants of his pCLP (Provisional Certified LeSS Practitioner) courses to such a Sprint Review, so if you are interested in experiencing this event live, you can join one of his courses or feel free to write to him. You can read more about him at https://less.works/profiles/robert-briese or on his company website https://www.leansherpas.com.

Robert Briese has published three more posts on the t2informatik Blog.

Robert Briese

Robert Briese is a coach, consultant and trainer in agile and lean product development and the founder and CEO of Lean Sherpas GmbH. As one of only 22 certified Large-Scale Scrum (LeSS) Trainers in the world, he works with individuals, teams and organizations on adopting practices for agile and lean development as well as improving organizational agility through cultural change. He has worked with (real) startups (Penta), corporate start-ups (Ringier, Yello) and also big organisations (SAP, BMW, adidas) to create an organisational design and adopt practices that allow faster customer feedback, learning and greater adaptability to change. He is a frequent speaker at conferences and regularly gives training courses in Large-Scale Scrum (LeSS).